DHCPInfo

!DHCPInfo

Although Select had gained the ability to obtain its address by DHCP, it wasn't actually visible anywhere. One of the things that I wanted to do was to have a notification pop up to say that a connection had been established. There wasn't any standard way to do that - Ian and I discussed many ways it could be achieved, and the result was the Alerter protocol, which is discussed in a later ramble. In the meantime, however, I wanted a way to report on the status of the DHCP connection.

I wrote the !DHCPInfo application, which would read information from the DHCP module and display the configuration, but I was never really convinced that this was a great idea. It duplicated the information collected through the Service_StatisticsEnumerate interface and was highly specific to DHCP. I kinda had the idea that it would become a general way to display other info, and that I'd expand the application but I never really got around to it.

ShowStat

*ShowStat itself was a simple tool to display the statistics from the network components. Strictly it can be used to display any statistics, but it was mostly the Internet components which had statistics worthy of display. The statistics enumeration and display was really quite flexible, and had clearly been designed well. It allowed components to report their statistics in a known manner, and to be displayed in different ways depending on the format required. Collecting the statistics was always intended to be fast, and returning the results was meant to be reliable, even across different iterations of the statistics provider.

Ethernet modules provided statistics through a slightly different interface, but could also provide additional specific information through the statistics format. Initially only the MBufManager module provided extra statistics, but the modules that I created started to introduce more providers, where there were possibly useful statistics to collect.

The DHCP module and ZeroConf were typical examples of providers which produced useful information. The previously mentioned PPP over Ethernet driver could record some useful information which might help with diagnosing issues - or just for interest. RouterDiscovery and ResolverMDNS returned their statistics in the same way as well, although they would mostly be inactive. John Tytgat's AppleTalk module provided a whole host of fields to provide extra information about what the module was doing.

The Resolver module hadn't provided any information, but it seemed sensible to add some statistics, especially as the cache operations were known to be pretty poor. Being able to see the statistics yourself can help to diagnose problems with the module, or its configuration. The Resolver module does offer a way to dump the contents of its cache, but this isn't documented (and I have no recollection of how to do it!) so wasn't as useful.

InternetTime provided some information, but needed a little more support. It, and DHCP, both dealt in time - for their very functioning and for their state machine respectively. Initially I considered just presenting the time fields as simple strings, or as integers (depending on what they represented), but that would make it hard to parse the results of the statistics request in any automatic way. Instead, I extended the API so that both absolute and relative times could be shown, and the data itself could be supplied in the most common formats - RISC OS 5-byte time, Unix epoch time, or system monotonic time. This gave quite a bit of flexibility for the statistics and covered all the common cases.
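To make the relationship between those three formats concrete, here is a minimal sketch. It is not the statistics API itself: the function names are made up for illustration, and it relies only on the general definitions that RISC OS 5-byte times count centiseconds from the start of 1900, Unix times count seconds from the start of 1970, and the monotonic timer counts centiseconds since the machine started (wrap-around of the monotonic timer is ignored here).

```python
from datetime import datetime, timezone

# Seconds between 1 Jan 1900 and 1 Jan 1970 (70 years, 17 of them leap years).
EPOCH_OFFSET_SECONDS = 2_208_988_800


def riscos_to_unix(five_byte):
    """Convert a little-endian 5-byte centisecond count to Unix seconds."""
    if len(five_byte) != 5:
        raise ValueError("expected exactly 5 bytes")
    centiseconds = int.from_bytes(five_byte, "little")
    return centiseconds / 100 - EPOCH_OFFSET_SECONDS


def unix_to_riscos(unix_seconds):
    """Convert Unix seconds to a little-endian 5-byte centisecond count."""
    centiseconds = round((unix_seconds + EPOCH_OFFSET_SECONDS) * 100)
    return centiseconds.to_bytes(5, "little")


def monotonic_to_unix(event_cs, now_cs, now_unix):
    """Place a monotonic-timer reading on the Unix timeline.

    The monotonic timer is only meaningful relative to 'now', so the
    caller supplies the current value of both clocks.
    """
    return now_unix - (now_cs - event_cs) / 100


# One of the timestamps from the sample output, treated as UTC.
state_change = datetime(2003, 5, 5, 19, 21, 3, tzinfo=timezone.utc)
as_five_bytes = unix_to_riscos(state_change.timestamp())
```

Whichever form a provider holds its times in, a display tool can reduce them to one timeline like this before formatting them.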

For DHCP it was also useful to be able to display the addresses of the server which supplied the data and any other server information returned. For these I created a new address statistic type, which could handle Ethernet, IP and Econet address types. In a complete lack of forethought, it didn't handle IPv6 addresses - which is strange as I added support for IPv6 local address negotiation to the PPP over Ethernet driver module, and then had to display the addresses as a bare string. Oh well.

The new types were useful, and pretty simple to document. As the document had never been released properly in the past - and was not part of the DCI 4 documentation that was embargoed - it was included in the Select documentation.

As mentioned above, the idea had been to change the *ShowStat command into an application - or rewrite it as an application - so that it might be easier to track things. We could have had pretty graphs of the state of things over time, or even just tracked results recorded regularly. The volatility indicator in the statistic description would have helped to indicate how often they should be polled, and so on. Never got around to that, sadly.

The tool itself had a couple of minor bugs - the only one I remember is the problem with one of the strings in the code which displayed the boolean states. In one case there was a comma missing from an array, which meant that one of the string values ran on into the next one, producing a string like "TRUE0" instead of "TRUE" and then "0" (I don't think that was the actual string, but it was something like that). Aside from that state printing out wrongly, it meant that every subsequent pair of states would be effectively inverted. A 0 might display as "ON" and a 1 as "0" (again, not sure what the actual strings were, but something like that). Pretty misleading.
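The effect of the missing comma is easy to reproduce. The sketch below is in Python rather than the tool's own source, but Python joins adjacent string literals just as C does, so the failure looks the same. The table contents and the `describe` helper are invented for illustration, since the real strings are not recorded.

```python
# Hypothetical label table: one (false, true) pair per display style.
INTENDED = ["FALSE", "TRUE", "0", "1", "OFF", "ON"]

# The same table with the comma after "TRUE" missing. The two adjacent
# literals are joined into one, so the table ends up one entry short and
# everything after the join slides down by one place.
BROKEN = ["FALSE", "TRUE" "0", "1", "OFF", "ON"]


def describe(table, style, value):
    """Return the label for a boolean value (0 or 1) in the given style."""
    return table[style * 2 + value]
```

With the broken table, `describe(BROKEN, 0, 1)` gives "TRUE0", and every later pair is shifted: style 1 shows a 0 as "1", and style 2 shows a 0 as "ON". Looking up the true state of the last style runs off the end of the table, which raises an error in Python where C would quietly read whatever followed the array.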

The tool itself was covered by a caveat that it was only intended as an example of how you might use the statistics, so this sort of thing shouldn't be surprising.

The drivers, too, were not without fault. Some drivers (EtherH springs to mind, but my memory is flakey) would fail to initialise data in the request block, which would mean that random results would be displayed. I added checks for this behaviour to try to ensure that the data returned was either valid or notified the user that the value might be unreliable. So with some hardware you might find warning messages printed beside fields where the results might not be correct.

ShowStat 1.19 (14 Mar 2006): DCI4 statistics gatherer

© ANT Ltd 1999, RISCOS Ltd 2001

Device Driver Information Block

Name : eh

Unit : 0

Address : 00:C0:32:00:88:84

Module : EtherH

Location : Expansion slot 8

Feature flags :

: Multicast reception is supported

: Promiscuous reception is supported

: Interface can receive erroneous packets

: Interface has a hardware address

: Driver can alter interface's hardware address

: Driver supplies standard statistics

Interface type : 10Base2 ethernet

Link Status : Interface okay

Active Status : Interface active

Receive mode : Direct, broadcast and multicast frames

Duplex mode : Full duplex

Polarity : Incorrect

Bytes transmitted : 176

Last TX address :

Received bytes : 119

Last RX address :

Module 'MbufManager' is a supplier titled 'Mbuf Manager'

Mbuf Manager : System wide memory buffer (mbuf) memory management

Active sessions : 4

Sessions opened : 5

Sessions closed : 1

Memory pool size : 262144

Small block size : 128

Large block size : 1536

Mbuf exhaustions : 0

Small mbufs in use : 2

Small mbufs free : 510

Large mbufs in use : 0

Large mbufs free : 128

Module 'ZeroConf' is a supplier titled 'ZeroConf client'

ZeroConf client : Zero-configuration IP address management

Interface : eh0:9

State : Configured

State change : 19:21:03 05-May-2003

Our address : 169.254.91.113

Address change : 19:20:59 05-May-2003

Total collisions : 0

Module 'AppleTalk' is a supplier titled 'AppleTalk Protocol Stack'

AppleTalk Protocol S: AARP, DDP, ZIP, RTMP, NBP, AEP, ATP, PAP, ASP, DSI, AFP

AARP ext lookups : 0

AARP int lookups : 0

AARP int hits : 0

DDP out requests : 5

DDP in datagrams : 0

DDP no prot. handler: 0

DDP too short error : 0

DDP too long error : 0

DDP checksum error : 0

RTMP version mismatc: 0

RTMP in error : 0

ZIP GetNetInfo : 1

ZIP GetNetInfo repli: 0

ZIP zone invalids : 0

NBP in lookups : 0

NBP in replies : 0

NBP out lookups : 0

NBP out replies : 0

AEP in requests : 0

ATP in packets : 0

ATP out packets : 0

ATP request retries : 0

ATP responce retries: 0

ATP release lost : 0

PAP open connection : 0

PAP in data : 0

PAP out data : 0

PAP in close connect: 0

PAP out close connec: 0

ASP in transactions : 0

ASP out transactions: 0

ASP in open sessions: 0

ASP out open session: 0

ASP in close session: 0

ASP out close sessio: 0

DSI in commands : 0

DSI out commands : 0

DSI in open streams : 0

DSI out open streams: 0

DSI in close streams: 0

DSI out close stream: 0

Mbuf failures : 0

Module 'DHCPClient' is a supplier titled 'DHCP client'

DHCP client : Dynamic interface 0

Interface : eh0

State : BOUND

Our address : 192.168.0.201

Server address : 192.168.0.100

Relay addess : No address

Lease period : 30 mins

T1 period : 15 mins

T2 period : 26 mins, 15 secs

DHCP started : 19:20:47 05-May-2003

Lease started : 19:20:51 05-May-2003

Lease ends : 19:50:51 05-May-2003

T1 end : 19:35:51 05-May-2003

T2 end : 19:47:06 05-May-2003

Module 'InternetTime' is a supplier titled 'Internet Time'

Internet Time : Internet time synchronisation client

Servers : 0

Last sync : Unspecified

Last offset : -

NTP requests : 0

NTP responses : 0

Time requests : 0

Time responses : 0

Module 'Resolver' is a supplier titled 'DNS resolver'

DNS resolver : DNS resolver

SWI requests : 2

SWI cache hits : 1

SWI fails : 0

Cache total : 0

Cache active : 0

Cache inactive : 0

Cache pending : 0

Cache expiring : 0

Cache failed : 0

Cache expired : 0
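The DHCP client timings in the output above can be checked by hand. RFC 2131 gives default renewal (T1) and rebinding (T2) times of 0.5 and 0.875 of the lease. A server is free to override them, so treating them as the defaults here is an assumption about this particular lease, and `lease_timers` is just a name for the arithmetic.

```python
from datetime import datetime, timedelta


def lease_timers(lease_seconds):
    """Return the default (T1, T2) periods for a lease, per RFC 2131."""
    t1 = timedelta(seconds=lease_seconds * 0.5)
    t2 = timedelta(seconds=lease_seconds * 0.875)
    return t1, t2


# Values taken from the sample output: a 30 minute lease starting 19:20:51.
lease_started = datetime(2003, 5, 5, 19, 20, 51)
t1, t2 = lease_timers(30 * 60)
t1_end = lease_started + t1
t2_end = lease_started + t2
```

For a 30 minute lease this gives a T1 of 15 minutes and a T2 of 26 minutes 15 seconds, ending at 19:35:51 and 19:47:06 respectively, which matches what the DHCP module reported.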

URLFetcher

I re-implemented the URLFetcher module from scratch, which was kinda fun. It let you find out all the evilness and collusion that the HTTP module had in it. The specification hints at a couple of things, but until you implement it you don't realise how bad it is. Things like the private blocks being manipulated by the module to set flags - that threw me completely. I was getting crashes when I was just fetching a page, and it turned out the memory in my module was being corrupted. It was only with a lot of digging that I finally worked out what was going on. It was also pretty disappointing to find out that there was a lot of built in knowledge about URL types in the module.

It had (I believe) been my intention to auto-load Fetchers when a request to access them was made but no fetcher was currently registered. However, it is possible to check the status of URLs of particular types as a general probe, and if the modules were auto-loaded then you would find that they were always loaded at an application's start. Maybe I misremember, but it certainly didn't seem as attractive as I'd hoped.

Proxying was interesting, partly because I found that if you set particular types of proxies with Acorn's module it would subsequently ignore all requests. That was a bit fun to discover. Also, it became clear that the processing of fetched headers and subsequent generation of the flags to indicate the fetch state was down to the protocol module, not the URL fetcher. It makes the URL fetcher simpler, but means that each fetcher has to implement the header processing itself (assuming that it has a concept of headers) to construct the flags. As a client, you have to either rely on the fetcher getting it right, OR re-parse the received data, ignoring the headers - essentially duplicating the work that every fetcher has done.

In my implementation I decided that this was a bit naff, so added the header parsing to the URL fetcher. According to a comment:

/* We want to buffer the headers locally, because the protocol module
   may not deliver the body and headers independently. This is not
   actually required by the specification, but unfortunately Browse
   relies on it. */

In any case, it wasn't that complex to do - just a small state machine to step through the different cases as data arrives. I'd already (long, long before - about 1999) written a DataURLFetcher module, so I had a source I could work from to try out different types of header and body forms.
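A state machine of that general kind might look something like the sketch below. It is not the module's code: the class name, the three states and the decision to lower-case header names are all assumptions, and it ignores folded (continuation) header lines. The point is only that data can arrive in arbitrary pieces, so incomplete lines are held back until the rest turns up.

```python
class HeaderSplitter:
    """Separate an HTTP-style header block from the body as chunks arrive."""

    def __init__(self):
        self.state = "status"    # "status" -> "headers" -> "body"
        self.status_line = None
        self.headers = []        # (name, value) pairs in arrival order
        self._pending = b""      # buffered bytes not yet forming a full line

    def feed(self, chunk):
        """Consume one chunk and return any body bytes it contained."""
        if self.state == "body":
            return chunk
        self._pending += chunk
        while self.state != "body":
            line, newline, rest = self._pending.partition(b"\n")
            if not newline:
                return b""       # incomplete line: wait for more data
            self._pending = rest
            text = line.rstrip(b"\r").decode("latin-1")
            if self.state == "status":
                self.status_line = text
                self.state = "headers"
            elif text == "":
                self.state = "body"   # a blank line ends the headers
            else:
                name, _, value = text.partition(":")
                self.headers.append((name.strip().lower(), value.strip()))
        # Whatever followed the blank line is the start of the body.
        body, self._pending = self._pending, b""
        return body
```

Feeding it `b"HTTP/1.0 200 OK\r\nContent-Ty"`, then `b"pe: text/html\r\n\r"`, then `b"\n<html>"` returns nothing for the first two calls and `b"<html>"` for the third, by which point the status line and the single header have been recorded.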

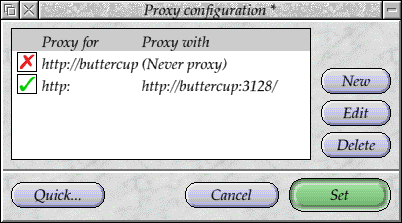

ProxySetup

There was a little !ProxySetup configuration plug-in I wrote so that the proxies could be configured in a central way for the 'global' (that is, non-application specific) settings. It showed up problems in the scrolling list gadget implementation with certain settings. Or possibly in the Acorn URL fetcher implementation for enumeration of proxies - I forget. I do remember seeing lots of garbage letters appearing in the ScrollList which looked very bad. To go with it, there was a SetProxies command line tool which would parse a simple text file to set the proxies up - the former configuration tool generated a script that would invoke the latter on system start up.

The 'Tests' directory has a couple of test programs in it that try out different things. One of them is MozillaTestURL, which is a build of the command line test tool for fetching URLs through the Mozilla Classic URL fetching library - which, at the implementation end, I had replaced with calls to the URL Fetcher module. The test had been created as part of the Mozilla port, and clearly I'd just dumped a binary in there for trying these things out.

HTTPFetcher

The very, very last thing I did on RISC OS proper - the week before I started at Picsel - was a panic project. "Oh my god, I've not actually written any C code in the past 5 months or so, I'd best try something just so that I'm not learning on the fly and looking a fool." So I implemented an HTTP Fetcher module.

Ok, I wrapped Curl in an HTTP Fetcher module (which isn't really as impressive, but still it did the job), and tried it out - this is where some of the problems like the header parsing got found out, because my module was doing something different.

It worked quite well - most of the time. The main problem was Browse which still seemed to make it upset, even though I'd fixed the workspace pointer collusion. I threw in FTP support as well, as that was really quite trivial after the rest had been done.

Prior to this (quite a bit prior), I'd written an Update library, and bundled this into a Toolbox Object. Essentially it was a window object which could be instantiated to check for the existence of a software update using the URL fetchers. The object was pretty simple to use - you gave it the URL of a description file, and the current version of the program. The object would fetch the description file and parse out of it the latest version number of the component and the URL it could be found at. If there was a later version it would offer it for download. It wasn't too complicated - it could do the download for you, as well, or let the application handle that.
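The check itself amounts to very little code. The sketch below is only an illustration of the idea: the real description file format isn't recorded here, so the "Key: value" layout, the `Version` and `URL` field names and the function names are all hypothetical, and the fetch is left out so that it works on text that has already been downloaded.

```python
def parse_description(text):
    """Parse hypothetical 'Key: value' lines into a dictionary."""
    fields = {}
    for line in text.splitlines():
        key, colon, value = line.partition(":")
        if colon:
            fields[key.strip().lower()] = value.strip()
    return fields


def version_key(version):
    """Turn a version such as '1.19' into (1, 19) so it compares numerically."""
    return tuple(int(part) for part in version.split("."))


def check_for_update(description_text, current_version):
    """Return the download URL if the description names a later version."""
    fields = parse_description(description_text)
    if version_key(fields["version"]) > version_key(current_version):
        return fields["url"]
    return None


# An invented description file for a component currently at version 1.19.
EXAMPLE = "Version: 1.20\nURL: http://example.com/component.zip\n"
offer = check_for_update(EXAMPLE, "1.19")
```

Here `offer` is the download URL, because 1.20 is later than 1.19; called with a current version of "1.20" the function returns `None` and nothing would be offered.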

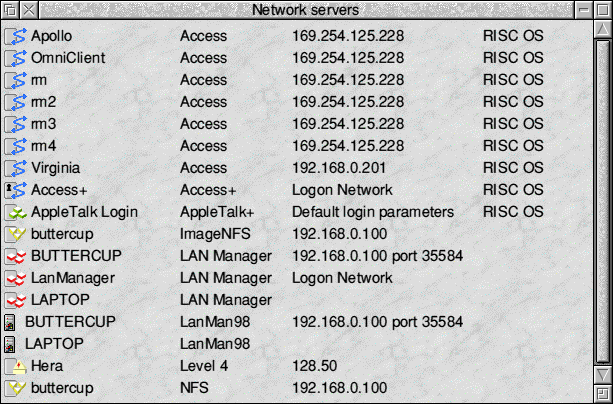

OmniClient

!OmniClient

OmniClient was a pretty well thought out component, and one that I wanted to make a first class stack within the later versions of the Operating System. Essentially it centralised the access to network file systems and print servers, giving a single place to access them from. Fighting against this was the filer, which had been improved during the Select developments. The OmniClient interface was more standardised, and therefore made it a better choice as a front end. It also offered enumeration facilities for the systems it supported, and could easily be extended to add other network filesystems.

NFS

NFS

Whilst we were working on RISC OS 4, we used the ImageNFS client to access our network storage. OmniClient already provided NFS detection as part of the OmniNFS module, so adding support to allow ImageNFS mounting of NFS servers was quite simple to do. This meant that you could see and mount NFS servers with ImageNFS through the OmniClient front end.

The NFS module itself wasn't suitable for most uses because of how it handled untyped files. The general convention that was being used for file types, and which I intended to apply in most systems, was to use ,xxx at the end of the filename to give the filetype. Untyped files would have the longer string ,llllllll,eeeeeeee appended to give the load and execution addresses. These trailing components would be stripped from the filename so that RISC OS only saw the base name. Any filenames without these trailing components would be given a standard type (usually text or data), unless they contained a dot extension (eg .zip). These extensions would be looked up in MimeMap and given an associated filetype, but the extension would not be stripped - it remained part of the filename.
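As a rough sketch of that convention, the decoding could be written as below. This isn't code from ImageNFS: `decode_name` is a made-up name, the small `EXTENSION_TYPES` table stands in for a real MimeMap lookup, and the filetype numbers in it are only there to make the example run.

```python
import re

# name,xxx where xxx is a three digit hexadecimal filetype
TYPED = re.compile(r"^(?P<base>.+),(?P<type>[0-9a-fA-F]{3})$")
# name,llllllll,eeeeeeee carrying load and execution addresses
UNTYPED = re.compile(
    r"^(?P<base>.+),(?P<load>[0-9a-fA-F]{8}),(?P<exec>[0-9a-fA-F]{8})$"
)

# Stand-in for MimeMap: extension -> filetype (illustrative values).
EXTENSION_TYPES = {"zip": 0xA91, "html": 0xFAF}
DEFAULT_TYPE = 0xFFF  # treat anything unrecognised as Text


def decode_name(name):
    """Return (name as RISC OS sees it, attributes) for a stored filename."""
    match = UNTYPED.match(name)
    if match:
        return match["base"], {
            "load": int(match["load"], 16),
            "exec": int(match["exec"], 16),
        }
    match = TYPED.match(name)
    if match:
        return match["base"], {"filetype": int(match["type"], 16)}
    # No trailing component: consult the extension, but keep it in the name.
    _, dot, extension = name.rpartition(".")
    if dot and extension.lower() in EXTENSION_TYPES:
        return name, {"filetype": EXTENSION_TYPES[extension.lower()]}
    return name, {"filetype": DEFAULT_TYPE}
```

So `ReadMe,fff` is seen as `ReadMe` with type FFF, `code,00008000,00008000` as an untyped `code` with both addresses set, and `archive.zip` keeps its full name but picks up a filetype from the extension table.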

ImageNFS supported all of these, and worked very nicely in the desktop. Viewing the same files on the native file system was easy, and the trailing types made it very easy to see what the intended files were.

NFS, on the other hand, only supported the ,xxx format. Irritatingly, the untyped files were handled by appending the addresses to the end of the file data. This wasn't very nice and I intended to change to the naming convention instead. The NFS module also implemented 'dead' files differently to other systems. As a special case, files with load and execution addresses of &DEADDEAD would be named with the extension ,xxx (literally). This would be simple to remove when the regular load and execution address code was added, but I never got around to it.

The NFS module needed to be improved to meet the more demanding requirements of the modern Internet stack. Many of the network modules were happy to just run and be quit with the Internet module, and device drivers present, but I wanted to be sure that the components were resilient in the face of the components being restarted. The NFS module needed to be updated to be able to understand that when the Internet module was quit its connections were severed.

Fortunately, the NFS module was well structured and could cope with the server going away. It was relatively easy to ensure that if the Internet module went away, all the connections were closed and the module went into a state where it would reconnect when needed. The OmniNFS module, which provided the registrations with the front end, was also updated so that the client list was refreshed if the stack was restarted.

LanManFS

LanManFS

The LanManFS module was a constant pain for me. It only handled the 8.3 filenames, which meant that it was more limited than most other file systems in terms of naming. It handled untyped files differently, using a file RISCOS.EA which contained the addresses for the files that were stored. Coupled with the filename length limitations, this restricted the usefulness of the module significantly.

I began adding support for long filenames, but this didn't progress beyond the experimental stages. It was held up mainly by the feeling that one day the sources might get merged, as the Pace version had already got long filename support - but the merge never happened.

Still, it was functional, and could work over IPX as well as IP. Not that IPX was particularly useful even in those days. Like the NFS module, LanManFS needed some updates to handle the Internet module going away and coming back, and local address changes. It was very useful to have the connection remain alive even when you restart the Internet module during testing.

The machine name would probably change whilst the system was running, due to DHCP address acquisition, and this needed to be handled by LanManFS. LanManFS announced its workstation name, and defended it, over the NetBIOS Name Service protocol. If the hostname changed, services would be sent around and the LanManFS module would release the name it had claimed through NBT (NetBIOS-over-TCP), and announce the new name instead. This meant that other systems (mostly Windows) would be aware of the machine's new name.

The name handling in LanManFS was one reason why the system would stall when the host name changed. With the above change to register the name with remote servers, the system would block whilst the registration was going on, because the code didn't expect to be running in the background. This meant that the machine locked up for about 5 seconds whilst the module registered, or defended, its name. To fix this problem, I made the name handling code asynchronous, happening in the background whilst other things were going on.

This provided the opportunity to expose the names to the rest of the system. The Resolver module was updated so that it would query LanManFS for names when an unqualified name was requested. Because it could happen in the background, the request took very little time and if known, the name was probably in the LanManFS name cache.

The result was that if you tried to use a hostname that was known to LanManFS (over IP) but not to DNS, it would resolve to the correct address - you could use Windows host names directly without any configuration and it would know them. I intended to place a name resolution service above Resolver at some later point, which would know about the providers and use them appropriately, rather than having Resolver know about the other ways of resolving names, which wasn't very modular.
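That never-written resolution service can be sketched in a few lines. Everything here is hypothetical - the provider tuples, the ordering, and the rule that the LanManFS names are only consulted for unqualified names - but it shows the shape of the idea: Resolver and LanManFS each become just another provider, and the knowledge of how to combine them lives in one place.

```python
def resolve(name, providers):
    """Try each provider in order and return (label, address) or None.

    Each provider is a (label, lookup, unqualified_only) tuple, where
    lookup(name) returns an address string or None.
    """
    qualified = "." in name
    for label, lookup, unqualified_only in providers:
        if qualified and unqualified_only:
            continue  # e.g. don't ask the NetBIOS cache about dotted names
        address = lookup(name)
        if address is not None:
            return label, address
    return None


# Dictionary-backed stand-ins for the real lookups, with made-up entries.
dns_table = {"www.example.com": "192.0.2.10"}
netbios_table = {"winbox": "192.168.0.42"}

PROVIDERS = [
    ("dns", dns_table.get, False),
    ("netbios", netbios_table.get, True),
]
```

With these tables, `resolve("winbox", PROVIDERS)` falls through DNS and is answered from the NetBIOS names, while `resolve("www.example.com", PROVIDERS)` never touches them.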

The support for Transact was improved, so that it could be used to send 'WinPopup' messages to other systems - a feature which was made redundant in Windows XP with service pack 2, and so was quite pointless. Ah well, it was nice at the time.

Later versions of Windows had disabled the old password mechanism for connecting to shares, as it was very insecure. The 'MS-CHAP' - Microsoft Challenge-Handshake Authentication Protocol - authentication mechanism, which had been around for quite a few years, became the lowest form of authentication which was acceptable for these systems. By changing registry settings you could disable this requirement and revert to the old insecure methods, but this wasn't really a good solution.

I already had an implementation of the MS-CHAP protocol which I used in the GenericPPP authentication system, so it was pretty simple to drop it into the LanManFS module to allow authentication through the new protocol.

MimeMap support was added to the filename decoding, so that the 3 character extension could be used to determine the filetype. Previously LanManFS had relied on the OmniClient extension mappings. These were in a separate file, controlled by OmniClient, but more complex to deal with and weren't used often in any case. MimeMap provided a more central way of dealing with the extensions.

Access, NetFS

OmniAccess


OmniNetFiler

The OmniAccess and OmniNetFS modules were also updated in similar ways to the other enumerator modules. These modules provided 'log on' services and enumeration of the available networks for the existing ShareFS and NetFS modules. They weren't all that interesting, and the only real features that were added to them were support for their file system modules being restarted, and for OmniClient itself being restarted.

In many cases the OmniClient protocol modules would kill themselves if they saw the services from OmniClient notifying them that the main module had been quit. It wasn't all that difficult to make sure that the modules shut themselves down into a quiescent state when that happened. Similarly, if they started up without the OmniClient module, they had to remain idle until it started.

Front end

The front end for OmniClient included icons on the IconBar, much like ShareFS and NetFS, with an icon for each mounted server. OmniClient had the ability to log on to the networks where authentication was required. OmniClient was originally intended to replace ShareFS and NetFS, and as I wanted to put it in the ROM, it needed to be updated. It would kill off the Filer applications, which was a problem. They needed to remain running in order to provide the sharing functions, and initially we would want both OmniClient and the Filer to coexist - enough to be switched between.

I modified both the ShareFS Filer and the Filer part of OmniClient so that they could be configured independently. OmniClient and ShareFS needed to be updated so that they could create and remove their IconBar icons. If you changed OmniClient$Filer to be 'n', the filer icons would be removed. Similarly, ShareFS$Filer could be set to disable its Filer.

OmniClient itself had some very badly designed templates, which were easy to update by using the FixUpTemplate tool to report the problems. OmniClient itself was implemented using DeskLib, and there was a lot of legacy cruft within the code. Even simple things like fading out an icon were done oddly, which needed to be fixed before it could be a part of the main ROM. Updating the module to use the core RISC OS version of the library wasn't too hard, though.

The sprite styles that OmniClient used were not even based on the original filer icons, and so they looked very out of place in the modern desktop. New icons were designed, using some of the regular drive icons as the base. The idea was to try to keep the filer style similar but recognisable. The style was pretty simple, and used different shapes for each of the types of devices.

ShareFS, AppleTalk, ImageNFS, LanManFS, LanMan98, NetFiler and NFS.

FTP

!FTP

The ANT !FTP tool was a little old, but it provided a quite nice interface for uploading and downloading files in a Filer-like manner. It also had an interesting interface to OmniClient, through OmniFTP which made it feel a lot more integrated. The module was intended to be included in the next release of the Operating System, along with the updated OmniClient.

Like the other ANT software, it had some odd ways of doing some things, which needed to be addressed before it could go into the main build. The strange fade operations were one example. The templates were also pretty ugly as they were, and needed to be realigned and buttons resized to match the standards. It was still quite a way from being ready to be used by real users; in particular it would crash if you restarted it - which I believed was due to some unclean freed memory. But it was getting there and could have been quite nice.

Marcel

!Marcel was never my favourite mail client. ANT's client worked reasonably well, and was the first (I think) on RISC OS to support MIME, but it was never my cup of tea - I preferred !Messenger. In any case, we only had the source to the main application. The support modules weren't buildable, so we couldn't really support it much.

It was mainly because of this that I didn't spend a lot of time working with it. I do remember, a few years before I got access to the source, pestering one of the ANT employees on their stand at a show because of their use of the 'c-client' library which came from the Pine mail tool. I never got very far with them over that, which isn't particularly surprising.

One of the interesting parts of Marcel was that it included a dynamic linker to load its components. I used to think that it was because of the dynamic linker that Marcel crashed quite a bit, but having used it quite a bit I'm not sure that that is the case. Aside from the use of DeskLib, I rather liked most of Nick Smith's ANT code. It was usually pretty readable, and easy to follow.

SockStats

!SockStats

In the very dim past, back when I was working on Doom, I think, I had written a few little experimental bits of code that would extract details of the Internet module's statistics and display them. This worked in the same way that *InetStat did, but the documentation for the statistics was pretty thin on the ground.

So when Chris Williams asked about the statistics, I had a nice example to give him, albeit very rough around the edges, which obtained the details. He fleshed the whole thing out and wrote a proper application that would display the details in a window. He also added details from the device drivers so that they could display details without needing to use the *ShowStat tool.

It was a pretty neat little tool, and it's another good example of how my half-hearted little bits of code were made to actually do something useful when I'd given up on them.

WebServe

!WebServe

Some of the work I did for Pace included some updates to !WebServe. I forget the requirements, and I'm not digging through the old documents, but I think the issue was that they needed a way to provide a service which could be accessed by clients to query the state of the system. I proposed a number of ways this could be done, from a module providing a network service in the background to an application that provided the results. The generalised solution was to provide a web server that could run configurable code - CGIs. !WebServe already existed, and so I suggested adding support for CGI to it.

So, that was what I ended up doing. !WebServe could support having programs run that were in the directory it served. It would execute them inside a TaskWindow, passing the necessary parameters to the task. I also proposed an addition to TaskWindow which would allow the TaskWindow to tell its controlling application that its buffer had filled up.

It was possible for the controlling application to tell the TaskWindow to pause its output, but not possible for the TaskWindow to do the same to the controlling application. This meant that it was possible for the TaskWindow to run out of memory, and be unable to tell the application to stop sending data to it.

The modifications weren't possible for the TaskWindow at the time, so the solution just had to work on the faith that the data sent would be small and wouldn't cause the buffers to overflow. As well as changes specifically for the CGI support, I also tidied up the code to make it cleaner to compile and more efficient. Prior to my changes, !WebServe would not even use SWI Wimp_PollIdle properly.

A few other bugs were fixed, as well. The application had been incorrectly displaying the 'disc full' icon due to a signed number problem. There were a few places where dates were used incorrectly, and those were fixed as well. Poor handling of nascent connections could have caused the application to run out of sockets, and was clearly visible in the user interface. Additionally, the application did not cope well if some of the Toolbox modules were not present, despite not actually requiring them to function.

The CGI support was quite functional, although there were a few security issues which were noted and not addressed. The CGI support differed from that of !NetPlex, which was a little unfortunate, but I had taken a different route in providing the CGIs, which required that they be able to run concurrently - each CGI had its own variable name space separate from other processes running, whereas with !NetPlex the CGI processes were expected to be within a single name space. !HTTPServ and !WebJames actually delegated the input socket to the CGI script, rather than processing the standard output. I didn't think that this was as safe, so went the TaskWindow route instead.

I was quite happy with !WebServe in the end. It did a good job and would try to keep everything tidy. It was more efficient than it had been, although there was still scope for improvement. The main disappointment was that it was at the mercy of the TaskWindow implementation, as there was no way to signal that the buffer had filled. I suggested an improvement to increase the size of the output buffer as well, as previously it would send data when 200 bytes had filled its buffer, leaving the remaining 56 bytes unused. It wasn't a huge increase but it did improve the performance of some operations.
